Building AI tools for client delivery has become one of my most frequent conversations lately, and it's a different conversation than it was even a few months ago.

A friend said to me the other day that every other post on Instagram is an "AI expert" deploying a new agent or automating another piece of their delivery, and that the pressure is real.

She has 20 years of experience, a very strong brand and client portfolio - but the pressure got to her.

The reels in which newfound "AI experts" show off their new tools look impressive, which fits the "perfect lifestyle" feeling you normally get from scrolling over there. So it makes sense that somewhere in the middle of all that, a little dark thought knocks at your door:

If I don't build something soon, I'm going to get left behind.

Falling behind is one thing, but the deeper fear is about something different.

The fear is about your current clients waking up one day and realising they don't need you anymore.

I understand the pressure, and the fear underneath it is real.

But what tends to get built in response to that fear - that's where things start going wrong.

1 of 4

The one question worth asking before you write a single prompt

Most coaches and consultants approach building AI tools for client delivery the same way they approach content: a new trend is happening in the market, so let's move fast and figure out the details later.

So they build first and justify it later.

What gets skipped, before any system prompt is written, is this:

Can my client get this exact output by pasting a prompt into ChatGPT or Claude on a Sunday afternoon?

If the answer is yes, the tool isn't raising your perceived value. It's eroding it. You've automated something the market already provides for free, wrapped it in your branding, and positioned it as a feature.

That's not differentiation. That's commoditization with extra steps.

I flagged this same trap from the other side in the 11PM Retention Test - don't become expensive AI.

The question sounds simple. It's actually ruthless - because most tools built in a panic fail it on the first pass, and coaches usually don't ask it until after the time and energy to "learn to vibecode" has already been spent.

2 of 4

When the branding is doing all the work

A lot of tools feel like they use your methodology. They use your language, reference your frameworks, and the outputs sound like you wrote them.

But try something: strip out the branding. Remove the familiar phrases. Ask whether a stranger reading this output (with no context) would need your specific approach to produce it, or whether it's well-written general advice dressed up in your vocabulary.

If it's the latter, the branding is doing all the work. The tool looks proprietary, but the output isn't.

This matters because clients work it out. Not consciously, and not immediately. But over time, the tool stops feeling like access to something only you can provide and starts feeling like a familiar interface for things they could find elsewhere.

The perceived value drifts, and you lose clients to slow drift more often than you lose them to anything dramatic.

3 of 4

Three filters before you build anything

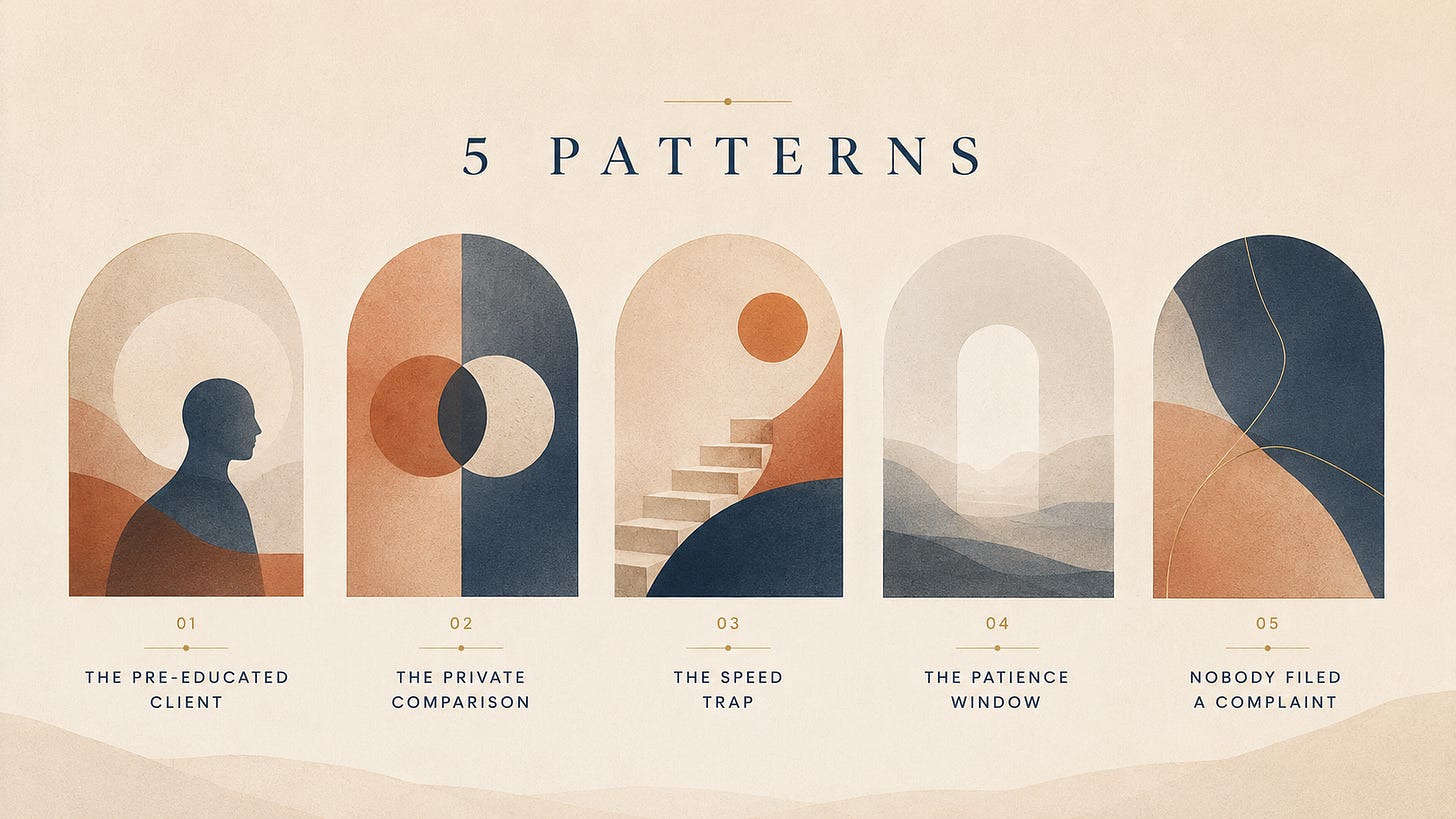

I run every tool idea through three questions before touching a system prompt.

A) Does this require my methodology to produce the output?

Not my language - my actual diagnostic logic. The specific failure patterns I've named, the framework lens that only exists inside my system. If a generic AI with none of my IP could produce something functionally identical, the methodology isn't doing load-bearing work. It's decoration.

B) Does this require my clients' accumulated data?

There's a category of tools that become genuinely hard to replicate over time - not because the AI is smarter, but because the data behind it took months to build. Client history, session patterns, what this specific person has tried and where they lost momentum. That context doesn't exist in a ChatGPT session. It accumulates, and that's what makes it defensible.

C) Does this require my ongoing judgment?

Some tools hold their value because they're a conduit for decisions only I can make. The moment a tool runs without my judgment embedded in it, the clock starts ticking on how distinctive it actually feels.

If a tool passes none of the three - it's a feature, not a defensible asset.

A feature is fine. Just don't position it as differentiation, and don't build it in response to extinction fear.
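If you think better in code, the three filters can be sketched as a tiny checklist. This is a minimal illustration, not how any FlowOS tool works: the `ToolIdea` class, its field names, the `classify` function, and the two labels are all hypothetical, and the yes/no answers still have to come from your own honest assessment.

```python
from dataclasses import dataclass


@dataclass
class ToolIdea:
    """A tool idea plus your honest yes/no answer to each filter (hypothetical structure)."""
    name: str
    needs_methodology: bool       # Filter A: requires your diagnostic logic, not just your language
    needs_client_data: bool       # Filter B: requires your clients' accumulated data
    needs_ongoing_judgment: bool  # Filter C: requires decisions only you can make


def classify(idea: ToolIdea) -> str:
    """Return "feature" if the idea passes none of the three filters, else "candidate asset"."""
    filters = (
        idea.needs_methodology,
        idea.needs_client_data,
        idea.needs_ongoing_judgment,
    )
    return "candidate asset" if any(filters) else "feature"


# Example: a branded summary bot that any generic chat model could replicate.
summary_bot = ToolIdea("Branded summary bot", False, False, False)
print(summary_bot.name, "->", classify(summary_bot))
```

Passing one filter only makes an idea a candidate. The sketch says nothing about how strongly a filter is passed, which is where the real judgment sits.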

4 of 4

What I just added to the FlowOS Lab

I built a tool specifically for this problem.

The AI Opportunity Explorer is the newest addition inside FlowOS Lab. It takes your current delivery context - what your clients actually do, where they lose momentum, what they ask for most - and maps where AI would compound your perceived value versus where it would quietly flatten it.

It's the conversation I'm having with coaches every week, built into a 5-7 minute session.

You answer a few questions about what clients experience inside your program. It tells you which tools are worth building, which ones will commoditize you, and which ones only hold up if your specific methodology is embedded inside them.

Once you have those ideas shaped, it's a lot easier to have the "to build or not to build?" conversation with clarity instead of pressure.

I know the pressure is real - we can all feel it. But it makes all the difference in the world to approach it with childlike curiosity, because if you have the expertise, the brand, and the clients, I'm almost certain you're already sitting on a valuable idea.

If you'd like a structured way to pressure-test which AI tool is actually worth building inside your delivery, that's exactly what The Gameplan is for. It's a 90-minute 1:1 retention diagnostic with a guided FlowOS pre-call session and a written 60-90 day action plan across the three blocks (Momentum, Founder, Upgrade).

Time to run back to The Lab now. Talk soon in Letter 026.

-Filip "to build or not to build?" Sardi

Frequently Asked Questions

Should coaches build AI tools for client delivery?

Only if the tool requires your methodology, your accumulated client data, or your ongoing judgment to produce its output. If a client could paste the same prompt into ChatGPT on a Sunday afternoon and get a comparable result, the tool isn't raising your perceived value - it's eroding it. Building from extinction fear usually produces features dressed up as differentiation. Build from clarity instead, after running the idea through three filters.

What's the one question to ask before building an AI tool for clients?

Can my client get this exact output by pasting a prompt into ChatGPT or Claude on a Sunday afternoon? If yes, you've automated something the market already provides for free, wrapped it in your branding, and positioned it as a feature. That's commoditization with extra steps. The question is ruthless because most tools built in a panic fail it on the first pass - and most coaches don't ask it until after the time and energy to learn to vibecode has already been spent.

Why does branded AI output stop feeling proprietary over time?

Strip the branding from the output. Remove the familiar phrases. If a stranger reading it - with no context - would not need your specific approach to produce something equivalent, the branding is doing all the work. Clients work this out, not consciously and not immediately, but over time. The tool stops feeling like access to something only you can provide and starts feeling like a familiar interface for things they could find elsewhere. Perceived value drifts, and you lose clients to slow drift more often than to anything dramatic.

What are the three filters for a defensible AI tool?

First, does it require your methodology - the specific diagnostic logic, failure patterns, and framework lens that only exist in your system? Second, does it require your clients' accumulated data - the history, session patterns, and context that took months to build and doesn't exist inside a fresh ChatGPT session? Third, does it require your ongoing judgment - decisions only you can make, embedded inside the tool? If a tool passes none of the three, it's a feature, not a defensible asset.

What is the AI Opportunity Explorer inside FlowOS Lab?

It's a 5-7 minute session built into FlowOS Lab. You answer a few questions about what your clients actually do, where they lose momentum, and what they ask for most. The tool maps where AI would compound your perceived value versus where it would quietly flatten it. It tells you which tools are worth building, which ones will commoditize you, and which ones only hold up if your specific methodology is embedded inside them.
